Mastering Javascript Text to Speech API - A Guide

Unlocking Potential - Javascript Text to Speech API Explored

Unlocking the potential of the Javascript text to speech API begins with understanding its core features. The API lets developers convert text into audible speech, a capability that can be built into many kinds of applications to improve the user experience. One example is a reading app that speaks written content aloud, giving users another way to consume it. This is particularly valuable for visually impaired users, because it makes digital content more accessible. The API's versatility shows in the range of applications it suits, from reading apps to navigation systems, and in its ability to speak text in multiple languages, depending on the voices available.

The Javascript text to speech API benefits developers as well as end users. It is designed to be easy to use, and documentation and tutorials are available to help with integration. That ease of use cuts the time and effort needed to implement speech output, leaving developers free to focus on other parts of their application. The API also supports a range of languages and voices, so developers can tailor the speech output to their needs.

The benefits of the Javascript text to speech API extend beyond its features and advantages. By integrating this technology into their applications, businesses can enhance user experience, improve accessibility, and potentially increase user engagement and retention. For example, an ecommerce platform that integrates a text to speech feature can provide a more interactive shopping experience for its users, potentially leading to increased sales. Moreover, the API's support for multiple languages can help businesses reach a wider audience, further enhancing their market reach. Thus, the Javascript text to speech API is not just a tool for developers, but a strategic asset for businesses.

| Topics | Discussions |
|---|---|
| Comprehensive Glossary: Understanding Key Terms in TTS Tech | Key terms and definitions related to text-to-speech (TTS) technology. |
| A High-Level Look at Utilizing Javascript Text to Speech API | An overview of using the Javascript Text to Speech API for TTS functionality. |
| Pros of Implementing Google Text to Speech API in Javascript | Advantages and benefits of using the Google Text to Speech API in Javascript. |
| Top Feature Highlights of the Javascript Text to Speech API | Notable features and capabilities of the Javascript Text to Speech API. |
| Exploring Use Cases for Google Text to Speech API in Javascript | Real-world applications and scenarios where the Google Text to Speech API can be utilized in Javascript. |
| Latest Research & Development Innovations in TTS Technology | Recent advancements and innovations in text-to-speech technology. |
| Rounding Up Essential Features of Javascript Text to Speech API | A comprehensive overview of the essential features offered by the Javascript Text to Speech API. |
| Unique Unreal Speech Advantages as a Javascript Text to Speech API | Distinct advantages and benefits of using Unreal Speech as a Javascript Text to Speech API. |
| FAQs: Navigating the Intricacies of Javascript Text to Speech API | Frequently asked questions and answers regarding the usage and intricacies of the Javascript Text to Speech API. |
| Supplemental Resources for Mastering Javascript Text to Speech API | Additional resources and references for further learning and mastering the Javascript Text to Speech API. |

Comprehensive Glossary: Understanding Key Terms in TTS Tech

API (Application Programming Interface): An API is a set of rules and protocols that allows different software applications to communicate with each other. In the context of Javascript Text to Speech, an API would enable the integration of text-to-speech functionality into a web application.

Javascript: Javascript is a high-level, interpreted programming language commonly used to create interactive effects within web browsers. It is an essential part of web applications, including those that utilize text-to-speech technology.

Text-to-Speech (TTS): Text-to-Speech is a form of assistive technology that reads digital text aloud. In the context of Javascript, TTS can be implemented via an API to enhance the accessibility and user experience of a web application.

Speech Synthesis: Speech Synthesis is the artificial production of human speech, often used in conjunction with text-to-speech technology. It allows a system to generate spoken language based on written input.

Web Speech API: The Web Speech API is a browser API for working with voice in web applications. It has two components: SpeechSynthesis (text-to-speech) and SpeechRecognition (asynchronous speech recognition).

SpeechRecognition: SpeechRecognition is the part of the Web Speech API that recognizes spoken language and transcribes it into written text.

Utterance: In the context of the Speech Synthesis API, an utterance refers to a piece of text that is to be synthesized into speech.

Voices: Voices, in the context of text-to-speech, refer to the different types of synthesized voices that can be used to read out the text. These can vary in accent, pitch, speed, and language.

Asynchronous: Asynchronous refers to operations that do not block other operations from executing until they have finished. In the context of the Web Speech API, it means that speech recognition or synthesis can occur while other operations continue.
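To make the glossary terms concrete, the sketch below ties together an utterance, a voice, and the asynchronous nature of synthesis. It assumes a browser that implements the Web Speech API. The helper names `pickVoice` and `speakAsync` are illustrative and not part of the API itself.

```javascript
// Pick the first voice whose language tag starts with the given prefix.
// `voices` is any array of objects with a `lang` property, such as the
// SpeechSynthesisVoice objects returned by speechSynthesis.getVoices().
function pickVoice(voices, langPrefix) {
  const wanted = langPrefix.toLowerCase();
  return voices.find((v) => v.lang.toLowerCase().startsWith(wanted)) || null;
}

// Hypothetical helper: speak `text` and resolve once the browser reports
// that speech has ended. Only usable where the Web Speech API exists.
function speakAsync(text, langPrefix) {
  return new Promise((resolve, reject) => {
    const utterance = new SpeechSynthesisUtterance(text); // the utterance
    const voice = pickVoice(window.speechSynthesis.getVoices(), langPrefix);
    if (voice) {
      utterance.voice = voice; // the voice
      utterance.lang = voice.lang;
    }
    utterance.onend = () => resolve(); // fires later, asynchronously
    utterance.onerror = (event) => reject(new Error(event.error));
    window.speechSynthesis.speak(utterance); // returns immediately
  });
}

// Guarded so the snippet does nothing outside a supporting browser.
if (typeof window !== 'undefined' && 'speechSynthesis' in window) {
  speakAsync('Hello from the Web Speech API.', 'en')
    .then(() => console.log('Finished speaking'))
    .catch((err) => console.error('Speech failed:', err.message));
}
```

In some browsers the voice list is empty until the `voiceschanged` event has fired, and speech may only start after a user gesture such as a click, so a production version would likely wait for both.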

A High-Level Look at Utilizing Javascript Text to Speech API

As businesses become increasingly aware of the potential of TTS technology, a common question arises: how to implement it effectively. One answer is the Javascript Text to Speech API, which lets developers convert text into audible speech directly within a web application, improving user experience and accessibility. Using it well requires working knowledge of Javascript and a basic grasp of how speech synthesis is configured, such as voices, rate, and pitch. It also helps to position the technology within the business's digital strategy, so that it supports overall objectives and user engagement.
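As a rough illustration of converting text to speech inside a web page, the sketch below speaks a string with adjustable rate, pitch, and volume. It assumes the browser's Web Speech API and uses the property ranges given in its documentation (rate 0.1 to 10, pitch 0 to 2, volume 0 to 1). `normalizeSettings` and `speakWithSettings` are hypothetical helper names.

```javascript
// Documented ranges for SpeechSynthesisUtterance properties (assumed here).
const LIMITS = {
  rate: { min: 0.1, max: 10, fallback: 1 },
  pitch: { min: 0, max: 2, fallback: 1 },
  volume: { min: 0, max: 1, fallback: 1 },
};

// Clamp caller-supplied numbers into the valid ranges and fall back to the
// default for anything missing or not a finite number.
function normalizeSettings(settings = {}) {
  const normalized = {};
  for (const [name, { min, max, fallback }] of Object.entries(LIMITS)) {
    const value = settings[name];
    normalized[name] = Number.isFinite(value)
      ? Math.min(max, Math.max(min, value))
      : fallback;
  }
  return normalized;
}

// Speak `text` with the normalized settings (browser only).
function speakWithSettings(text, settings) {
  const utterance = new SpeechSynthesisUtterance(text);
  Object.assign(utterance, normalizeSettings(settings));
  window.speechSynthesis.speak(utterance);
}

if (typeof window !== 'undefined' && 'speechSynthesis' in window) {
  speakWithSettings('Welcome to our site.', { rate: 0.9, pitch: 1.1 });
}
```

Clamping before assignment keeps out-of-range input, for example from a settings slider, from producing surprising results in different browsers.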

Pros of Implementing Google Text to Speech API in Javascript

Implementing Google's Text to Speech API in Javascript addresses a common need: better user interaction and accessibility in web applications. The service turns text into audible speech, enriching the user experience. Deploying it effectively requires familiarity with Javascript and its libraries, along with an understanding of speech synthesis techniques. Aligning the technology with the company's digital strategy helps ensure it serves overarching objectives and boosts user engagement.
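For orientation, here is a minimal sketch of calling Google's service from Javascript over REST. The endpoint and field names follow the Cloud Text-to-Speech v1 REST API as best understood here and should be checked against the current reference. The API key argument is a placeholder, and `buildSynthesisRequest` and `synthesize` are hypothetical helper names.

```javascript
// Assumed endpoint of the Cloud Text-to-Speech v1 REST API.
const GOOGLE_TTS_URL = 'https://texttospeech.googleapis.com/v1/text:synthesize';

// Build the JSON body for a synthesis request.
function buildSynthesisRequest(text, options = {}) {
  const { languageCode = 'en-US', voiceName, speakingRate = 1 } = options;
  const voice = { languageCode };
  if (voiceName) voice.name = voiceName; // optional specific voice
  return {
    input: { text },
    voice,
    audioConfig: { audioEncoding: 'MP3', speakingRate },
  };
}

// Send the request and return a data URL that an <audio> element can play.
async function synthesize(text, apiKey, options) {
  const url = `${GOOGLE_TTS_URL}?key=${encodeURIComponent(apiKey)}`;
  const response = await fetch(url, {
    method: 'POST',
    headers: { 'Content-Type': 'application/json' },
    body: JSON.stringify(buildSynthesisRequest(text, options)),
  });
  if (!response.ok) {
    throw new Error(`Synthesis failed with HTTP ${response.status}`);
  }
  const { audioContent } = await response.json(); // base64-encoded audio
  return `data:audio/mpeg;base64,${audioContent}`;
}
```

A caller might run `new Audio(await synthesize('Hello', 'YOUR_API_KEY')).play()` in a browser. Because a key embedded in client-side code is visible to users, such requests are usually better routed through a server the developer controls.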

Enhancing social development with Javascript text to speech API benefits

Javascript's TTS API, which converts written text into audible speech, offers significant advantages for social development. It improves user interaction, accessibility, and engagement in web applications, providing a more immersive and inclusive digital experience. The benefits include higher user satisfaction, better retention rates, and a broader reach to audiences with varying abilities. Making the most of the tool requires an understanding of Javascript and its libraries, as well as speech synthesis techniques. Integrating the API into a company's digital strategy therefore helps achieve business objectives and maximize user engagement.

Business and ecommerce growth through Javascript text to speech API implementation

Recognizing the potential of Javascript's Text to Speech API—a tool that transmutes text into spoken language—can be a game-changer for businesses and ecommerce platforms. This technology, when adeptly implemented, can revolutionize user interaction, accessibility, and engagement, thereby fostering a more inclusive digital environment. It can lead to heightened user satisfaction, bolstered retention rates, and an expanded audience reach, including those with diverse abilities. However, harnessing the power of this tool necessitates a profound comprehension of Javascript and its libraries, along with speech synthesis methodologies. Hence, the strategic integration of this API into a business's digital blueprint is paramount for realizing business goals and optimizing user engagement.

Law and paralegal sectors' efficiency boost with Javascript text to speech API

Law and paralegal sectors face a significant problem: inefficiency in document processing and client communication. It disrupts workflows, causing delays and potential errors. One solution is to integrate Javascript's Text to Speech API. When skillfully incorporated, the technology converts text into spoken language, streamlining document review and improving client interaction. This requires an understanding of Javascript and its libraries, as well as speech synthesis methodologies. Strategic implementation of the API is therefore crucial for boosting efficiency and client engagement in these sectors.

Scientific research and engineering advancements using Javascript text to speech API

Scientific research and engineering fields are increasingly aware of the potential of Javascript's Text to Speech API. This awareness stems from a pressing problem—inefficiency in data interpretation and communication. By leveraging the power of this API, researchers and engineers can convert complex textual data into audible speech, enhancing comprehension and facilitating efficient data analysis. However, this necessitates a profound grasp of Javascript, its libraries, and speech synthesis techniques. Positioning this API strategically within research and engineering workflows can significantly augment data interpretation, thereby fostering innovation and driving advancements in these fields.

Medical research and healthcare transformation via Javascript text to speech API

Javascript's Text to Speech API can be a transformative tool in medical research and healthcare. When used well, this feature-rich technology turns intricate textual data into comprehensible speech, streamlining data interpretation. Its advantage is better data comprehension, a critical factor in the fast-paced, data-driven world of healthcare. The benefit is twofold: it accelerates data analysis, and it fosters innovation by letting researchers focus on discovery and development rather than on deciphering data. A solid understanding of Javascript, its libraries, and speech synthesis techniques is a prerequisite for fully exploiting the API's potential.

Education and training elevation using Javascript text to speech API

Delving into the realm of education and training, Javascript's Text to Speech API emerges as a potent tool for elevating learning experiences. This advanced technology, when skillfully implemented, transforms complex textual content into audible speech—facilitating a more immersive, engaging learning environment. It fosters a deeper understanding of subject matter, particularly in technical fields where comprehension can be challenging. Moreover, it empowers educators to deliver content more effectively, enhancing student engagement and comprehension. However, to fully leverage this API's capabilities, a profound knowledge of Javascript, its libraries, and speech synthesis methodologies is essential.

Finance and corporate management gains with Javascript text to speech API integration

In finance and corporate management, Javascript's Text to Speech API can be a game-changer. By converting intricate financial data and corporate reports into audible speech, it makes complex information easier to absorb and supports decision-making. It also streamlines communication within organizations, fostering a more efficient, productive work environment. Harnessing the API's full potential requires a solid understanding of Javascript, its libraries, and speech synthesis methodologies. When integrated well, the technology can change the way businesses operate, driving growth and profitability.

Government operations streamlined by Javascript text to speech API adoption

Adopting Javascript's Text to Speech API in government operations presents a transformative feature—its ability to convert complex legislative documents into audible speech. This advantage fosters a more efficient comprehension of intricate information, thereby expediting decision-making processes. The benefit is a streamlined operation, enhancing productivity and efficiency within governmental departments. However, to fully leverage this technology, a comprehensive understanding of Javascript, its libraries, and speech synthesis methodologies is imperative. When proficiently integrated, this API can revolutionize governmental operations, promoting transparency and accountability.

Industrial manufacturing and supply chains optimization with Javascript text to speech API

Industrial manufacturing and supply chains—once considered static, linear systems—are now experiencing a paradigm shift with the integration of Javascript's Text to Speech API. This advanced technology, when applied to intricate manufacturing processes, can transform complex procedural instructions into audible speech, thereby enhancing comprehension and reducing errors. It also optimizes supply chain management by converting voluminous data into digestible, audible information, facilitating swift decision-making. However, harnessing the full potential of this API necessitates a profound understanding of Javascript, its libraries, and speech synthesis methodologies. When adeptly implemented, it can catalyze a significant leap in operational efficiency, productivity, and accuracy across industrial manufacturing and supply chains.

Top Feature Highlights of the Javascript Text to Speech API

Given the transformative potential of Javascript's Text to Speech API, its key features are worth highlighting. Its core strength is converting intricate textual data into audible speech, which aids comprehension and reduces errors in complex processes. It can also turn extensive text, such as supply chain data, into easily digestible audible information that supports swift, informed decision-making. Fully leveraging the technology requires a solid understanding of Javascript, its libraries, and speech synthesis methodologies. When skillfully deployed, the API can drive significant improvements in operational efficiency, productivity, and accuracy across various sectors.

Deployment simplicity: A key feature of Javascript text to speech API

Amid the myriad of Javascript's Text to Speech API features, one stands out—deployment simplicity. This API, with its intuitive design, allows for seamless integration into existing systems, even those handling complex textual data. It transforms this data into audible speech, thereby enhancing comprehension and reducing errors. However, this simplicity does not compromise its ability to handle extensive supply chain data, converting it into easily understandable, audible information. This feature, when utilized effectively, can lead to significant improvements in operational efficiency, productivity, and accuracy across various sectors.
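One way to keep deployment simple is to detect support before using the API and fall back gracefully where it is missing. The sketch below assumes the browser's Web Speech API. `detectSpeechSupport` is a hypothetical helper, and the `webkitSpeechRecognition` check reflects the prefixed name some browsers use.

```javascript
// Report which parts of the Web Speech API a given global object exposes.
// Passing the object in (instead of reading `window` directly) keeps the
// check usable in tests and non-browser environments.
function detectSpeechSupport(globalObject) {
  const g = globalObject || {};
  return {
    synthesis: 'speechSynthesis' in g && 'SpeechSynthesisUtterance' in g,
    recognition: 'SpeechRecognition' in g || 'webkitSpeechRecognition' in g,
  };
}

const support = detectSpeechSupport(
  typeof window !== 'undefined' ? window : undefined
);

if (support.synthesis) {
  window.speechSynthesis.speak(
    new SpeechSynthesisUtterance('Speech output is available.')
  );
} else {
  // Fallback: keep the content readable as plain text.
  console.log('Speech synthesis is not available in this environment.');
}
```

Checking once at startup lets an application hide its read-aloud controls entirely when synthesis is unavailable.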

Javascript text to speech API's compliance with legal regulations: A top feature highlight

Another point worth highlighting is how Javascript's Text to Speech API fits with legal and regulatory requirements. Beyond its user-friendly design and text-to-speech conversion, it can be used in ways that respect data privacy standards, intellectual property rights, and other relevant legal frameworks. Compliance ultimately depends on how an application handles the text and audio it processes. Used with that in mind, and given its ability to process extensive supply chain data, it is a robust tool for businesses that want to improve operational efficiency while maintaining legal integrity.

Cost-effectiveness and versatility in Javascript text to speech API features

Grasping the cost-effectiveness and versatility of Javascript's Text to Speech API is crucial for businesses. This API, beyond its compliance with legal regulations, offers a cost-effective solution for data-to-speech conversion—eliminating the need for expensive third-party services. Its versatility is evident in its ability to process a wide range of data types, from supply chain information to customer feedback. Furthermore, its adaptability to various business models and its compatibility with different platforms make it a versatile tool for businesses. This combination of cost-effectiveness and versatility makes Javascript's Text to Speech API an invaluable asset for businesses striving for operational efficiency and legal integrity.

Wider market reach through Javascript text to speech API's innovative features

Recognizing the expansive market reach potential of Javascript's Text to Speech API is paramount for industry leaders. This innovative tool, beyond its cost-efficiency, provides a unique solution for transforming diverse data into audible content—eradicating reliance on costly external services. Its robustness is demonstrated in its capacity to handle a myriad of data formats, from intricate supply chain details to nuanced customer insights. Moreover, its flexibility across various business structures and its interoperability with multiple platforms underscore its adaptability. This fusion of cost-effectiveness, versatility, and adaptability positions Javascript's Text to Speech API as a vital instrument for businesses aiming for operational excellence and regulatory compliance.

User-friendliness: A standout characteristic of Javascript text to speech API

Understanding the user-friendliness of Javascript's Text to Speech API is crucial for tech-savvy professionals. This advanced tool, renowned for its simplicity, offers an intuitive interface for converting complex data into audible content—eliminating the need for expensive third-party services. Its strength lies in its ability to process a wide range of data types, from intricate logistics data to subtle consumer feedback. Furthermore, its compatibility with diverse business models and seamless integration with various platforms highlight its versatility. This blend of simplicity, flexibility, and adaptability makes Javascript's Text to Speech API an indispensable asset for businesses striving for operational efficiency and regulatory adherence.

Sustainability-focused features in Javascript text to speech API

The sustainability-focused features of Javascript's Text to Speech API bring several technical advantages. Its robust architecture enables efficient data processing, which can reduce energy consumption and carbon footprint. Its lightweight design keeps server load low, promoting sustainable digital practices. Its ability to handle diverse types of text, from granular customer insights to complex operational data, underscores its versatility. The API thus supports operational efficiency while fostering an eco-friendlier digital environment, making it a useful tool for businesses committed to sustainability.

Scalability excellence in Javascript text to speech API feature highlights

Scaling a TTS application can be daunting. The challenge is handling a surge in processing demand without compromising performance or sustainability, a concern for developers because it threatens the efficiency of their digital solutions. Javascript's Text to Speech API handles this well: its robust architecture and lightweight design allow efficient processing, minimal server load, and flexibility across diverse types of text. It therefore offers a solution that both improves operational efficiency and promotes sustainable digital practices.
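One practical scaling concern is long text. A common approach, sketched below, is to split the text into sentence-sized chunks and queue each as its own utterance, since some browsers have been reported to stop partway through very long utterances. The sketch assumes the browser's Web Speech API. `chunkText` and `speakLongText` are hypothetical helpers, and the 200-character default is an arbitrary choice.

```javascript
// Split text into chunks of roughly `maxLength` characters, breaking only
// at sentence boundaries. A single sentence longer than `maxLength` is
// kept whole rather than cut mid-sentence.
function chunkText(text, maxLength = 200) {
  const sentences = text.match(/[^.!?]+[.!?]*\s*/g) || [];
  const chunks = [];
  let current = '';
  for (const sentence of sentences) {
    if (current && (current + sentence).trim().length > maxLength) {
      chunks.push(current.trim());
      current = '';
    }
    current += sentence;
  }
  if (current.trim()) chunks.push(current.trim());
  return chunks;
}

// Queue one utterance per chunk; speak() appends to the browser's queue,
// so the chunks are spoken in order (browser only).
function speakLongText(text) {
  for (const chunk of chunkText(text)) {
    window.speechSynthesis.speak(new SpeechSynthesisUtterance(chunk));
  }
}

if (typeof window !== 'undefined' && 'speechSynthesis' in window) {
  speakLongText('First sentence. Second sentence! Is this the third?');
}
```

Because the chunks are queued in the browser, this approach adds no work on the application's own servers.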

Exploring Use Cases for Google Text to Speech API in Javascript

Google's Text to Speech API, used from Javascript, has a robust, scalable architecture that makes it a compelling option for demanding workloads. Its lightweight design keeps server load low, which helps maintain performance. For developers, this means diverse types of text can be processed efficiently, improving operational efficiency and supporting sustainable digital practices. It also copes with surges in demand, which makes it a dependable choice for TTS applications.

Empowering educational institutions and training centers with Javascript text to speech API

Recognizing the growing need for advanced learning tools, educational institutions and training centers can leverage Javascript's Text to Speech API. The technology, known for its scalability and robustness, provides a streamlined way to deliver text as speech. Its lightweight architecture keeps server load low, improving performance and promoting sustainable digital practices. It also processes diverse types of text efficiently, giving these institutions a reliable, efficient, and versatile tool that copes with surges in demand.

Industrial manufacturers and distributors: Transforming operations with Javascript text to speech API

Industrial manufacturers and distributors are transforming their operations with Javascript's Text to Speech API, a feature-rich, high-performing solution. The API is adaptable and integrates into existing systems, improving operational efficiency. Its main benefit is converting large amounts of text into audible speech, which supports real-time communication and data dissemination. Its robustness, scalability, and capacity to handle diverse types of text make it a dependable choice for TTS applications.

Scientific research and technology development groups leveraging Javascript text to speech API

Scientific research and technology development groups are adopting Javascript's Text to Speech API, an advanced tool that turns text into audible speech. Recognized for its robustness and scalability, the API integrates into existing systems and improves operational efficiency. Its ability to process diverse types of text makes it a reliable tool for TTS applications. Its adaptability and performance make it a strong option for organizations that want to improve real-time communication and data dissemination.

Social welfare organizations' impact maximization using Javascript text to speech API

Javascript's Text to Speech API, a feature-rich tool, offers significant advantages to social welfare organizations. Its ability to convert diverse text into audible speech improves accessibility and thereby increases impact. The API integrates into existing systems and is robust and scalable. As a result, it improves operational efficiency and real-time communication, both essential for these organizations to disseminate information and engage with their audience.

Public offices and government contractors: Streamlining processes with Javascript text to speech API

Public offices and government contractors are increasingly recognizing the transformative potential of Javascript's Text to Speech API. This powerful tool, renowned for its versatility and robustness, enables the conversion of complex text data into audible speech—streamlining communication processes. Its seamless integration capabilities, scalability, and adaptability position it as a leading solution in the realm of TTS technology. By leveraging this API, these entities can enhance accessibility, improve real-time communication, and ultimately, optimize operational efficiency.

Improving patient care in hospitals using Javascript text to speech API

With Javascript's Text to Speech API, hospitals can revolutionize patient care. This API's feature—its ability to convert intricate text into audible speech—provides an advantage in enhancing communication efficiency. The benefit is evident in improved patient interactions, as medical information can be delivered audibly, aiding comprehension. Furthermore, its scalability and adaptability make it a robust solution for diverse healthcare settings, fostering an environment of inclusivity and real-time communication.

Unveiling potential for businesses and ecommerce operators with Javascript text to speech API

Unveiling a new horizon for businesses and ecommerce platforms, Javascript's Text to Speech API offers a transformative approach to customer engagement. This advanced technology—capable of transmuting complex text into audible speech—provides a unique edge in optimizing communication efficacy. Its potential is particularly pronounced in ecommerce, where it can enhance user experience by delivering product descriptions and instructions audibly, thereby facilitating comprehension. Moreover, its scalability and adaptability render it a potent tool for diverse business environments, fostering a culture of inclusivity and real-time communication. Its robustness and versatility make it an indispensable asset for businesses aiming to stay ahead in the digital age.
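Reading a product description aloud could look roughly like the sketch below, which wraps the browser's speech queue in a small controller with read, pause, resume, and stop actions. It assumes the Web Speech API. `createReader` is a hypothetical helper, and the element ids `read-aloud` and `product-description` are placeholders for whatever markup a shop page actually uses.

```javascript
// Create a small controller around a SpeechSynthesis-like object.
// `synth` must offer speak(), pause(), resume(), and cancel();
// `makeUtterance` turns a string into an utterance. Both are injected so
// the logic can be exercised outside a browser.
function createReader(synth, makeUtterance) {
  return {
    read(text) {
      synth.cancel(); // drop anything still queued or playing
      synth.speak(makeUtterance(text));
    },
    pause: () => synth.pause(),
    resume: () => synth.resume(),
    stop: () => synth.cancel(),
  };
}

if (typeof window !== 'undefined' && 'speechSynthesis' in window) {
  const reader = createReader(
    window.speechSynthesis,
    (text) => new SpeechSynthesisUtterance(text)
  );
  // Placeholder ids; adjust to the page's real markup.
  const button = document.querySelector('#read-aloud');
  const description = document.querySelector('#product-description');
  if (button && description) {
    button.addEventListener('click', () => reader.read(description.textContent));
  }
}
```

Starting speech from a click handler also fits browsers that only allow audio after a user gesture.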

Law firms and paralegal service providers: Unleashing potential with Javascript text to speech API

For law firms and paralegal service providers, Javascript's Text to Speech API—characterized by its advanced text-to-audio conversion capability—unlocks a new dimension of efficiency. This feature, particularly advantageous in the legal sector, enables seamless conversion of intricate legal documents into audible content, thereby enhancing comprehension and accessibility. The benefit is twofold: it not only streamlines internal operations by facilitating quick assimilation of information, but also fosters client engagement by delivering complex legal jargon in an easily digestible format. Thus, Javascript's Text to Speech API emerges as a powerful tool for legal entities aiming to optimize their service delivery in the digital era.

Revolutionizing banking operations with Javascript text to speech API in financial agencies

Financial institutions face a significant challenge—processing vast amounts of textual data efficiently. This issue, often exacerbated by the complexity of financial terminologies, can lead to operational inefficiencies and inaccuracies. However, the advent of Javascript's Text to Speech API offers a transformative solution. This advanced technology converts complex financial texts into audible content, thereby simplifying comprehension and enhancing data accessibility. Consequently, it revolutionizes banking operations by streamlining data processing, improving accuracy, and fostering customer engagement through the delivery of financial information in an easily digestible format.

Latest Research & Development Innovations in TTS Technology

Following the latest research in TTS synthesis, along with recent engineering case studies, offers several advantages. It provides insight into cutting-edge technology, shows potential applications in business, education, and social sectors, and demonstrates tangible benefits. This knowledge helps businesses improve customer experience, educators create inclusive learning environments, and social platforms foster accessibility.

1. A Survey on Neural Speech Synthesis

- Download URL: https://doi.org/10.48550/arXiv.2106.15561

- Date of publication: June 29, 2021

- Authors: Xu Tan, Tao Qin, Frank Soong, and Tie-Yan Liu (arXiv preprint, Electrical Engineering and Systems Science category)

- Subject: Audio and Speech Processing

- Summary: This paper provides a comprehensive survey on neural TTS synthesis, covering key components such as text analysis, acoustic models, and vocoders. It also explores advanced topics including fast TTS, low-resource TTS, robust TTS, expressive TTS, and adaptive TTS. The paper summarizes relevant resources and discusses future research directions, making it valuable for both academic researchers and industry practitioners in the field of TTS.

2. Text-to-speech Synthesis System based on Wavenet

- Download URL: https://web.stanford.edu/class/cs224s/project/reports_2017/Yuan_Li.pdf

- Date of publication: 2017

- Authors: Yuan Li, Xiaoshi Wang, and Shutong Zhang of Stanford University's Department of Computer Science

- Subjects: Deep Learning, Machine Learning, Text-to-Speech synthesis

- Summary: This research project focuses on building a parametric TTS system based on WaveNet, a deep neural network introduced by DeepMind. The model utilizes convolutional layers to extract valuable information from input data and generate raw audio waveforms. The paper discusses the model's performance and identifies areas for improvement, providing insights into the challenges of TTS synthesis.

3. NaturalSpeech: End-to-End Text to Speech Synthesis with Human-Level Quality

- Download URL: https://doi.org/10.48550/arXiv.2205.04421

- Date of publication: May 9, 2022

- Authors: Xu Tan, Jiawei Chen, Haohe Liu, Jian Cong, Chen Zhang, Yanqing Liu, Xi Wang, Yichong Leng, Yuanhao Yi, Lei He, Frank Soong, Tao Qin, Sheng Zhao, and Tie-Yan Liu (arXiv preprint, Electrical Engineering and Systems Science category)

- Subject: Audio and Speech Processing

- Summary: This paper defines human-level quality in TTS synthesis and presents NaturalSpeech, an end-to-end TTS system that achieves human-level quality on a benchmark dataset. The system utilizes a variational autoencoder (VAE) for text-to-waveform generation, incorporating modules such as phoneme pre-training, differentiable duration modeling, bidirectional prior/posterior modeling, and a memory mechanism in VAE. Experimental evaluations demonstrate the system's performance, showing no statistically significant difference from human recordings at the sentence level.

Rounding Up Essential Features of Javascript Text to Speech API

Delving into the realm of Text to Speech technology, one encounters a plethora of key terms and concepts. A comprehensive glossary serves as a valuable resource, elucidating terms such as 'Javascript Text to Speech API', 'Google Text to Speech API', and 'Unreal Speech'. These terms form the backbone of TTS technology, each with its unique features, advantages, and benefits. For instance, the Javascript Text to Speech API is renowned for its versatility and ease of implementation, while Google's API is lauded for its robustness and wide range of use cases.

Recent advancements in TTS technology have been nothing short of revolutionary. Cutting-edge research and development initiatives have led to the creation of APIs that are more efficient, accurate, and user-friendly. For instance, the Javascript Text to Speech API now boasts an array of features that cater to diverse needs, from ecommerce platforms to academic research. Meanwhile, Unreal Speech offers unique advantages over its counterparts, such as superior voice quality and naturalness, making it a formidable contender in the TTS arena.

Despite the complexity of TTS technology, resources abound for those seeking to master it. FAQs provide answers to common queries about the Javascript Text to Speech API, while supplemental resources offer in-depth insights and tutorials. Whether one is a seasoned software engineer or a business owner looking to leverage TTS for their company, these resources make the journey of understanding and implementing TTS technology significantly smoother.

Javascript Text To Speech API: Quick Python Example

```python
# Import the required library
import pyttsx3

# Initialize the speech engine
engine = pyttsx3.init()

# Set the text you want to convert to speech
text = "Hello, this is a quick Python example of Text to Speech."

# Queue the text for speaking with the say() method
engine.say(text)

# Process the queue and block until speech has finished
engine.runAndWait()
```

This Python example demonstrates a simple TTS conversion using pyttsx3, a TTS conversion library for Python. The text to be converted is set, and the say() method queues it for speaking. Finally, the runAndWait() method processes the queue and blocks until the speech has finished.

Javascript Text To Speech API: Quick Javascript Example

```javascript
// Create a new SpeechSynthesisUtterance instance
const utterance = new SpeechSynthesisUtterance();

// Set the text you want to convert to speech
utterance.text = "Hello, this is a quick Javascript example of Text to Speech.";

// Queue the utterance for speaking with the speak() method
window.speechSynthesis.speak(utterance);
```

This Javascript example illustrates a straightforward TTS conversion in the browser. A new SpeechSynthesisUtterance instance is created, and the text to be converted is set. The speak() method then adds the utterance to the browser's speech queue, where it is spoken once any earlier utterances have finished.
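The example above speaks with the browser's default voice. As a minimal sketch of how a language-specific voice might be chosen, the helper below searches a list of voices (such as the one returned by speechSynthesis.getVoices()) for a matching language tag. The name pickVoice and its matching rules are assumptions made for illustration, not part of the Web Speech API.

```javascript
// Hypothetical helper: pick a voice whose language tag matches `lang`.
// An exact match (e.g. "fr-FR") wins over a base-language match (e.g. "fr").
// `voices` is any array of objects with a `lang` property, such as the
// SpeechSynthesisVoice objects returned by speechSynthesis.getVoices().
function pickVoice(voices, lang) {
  // Some platforms report tags with underscores ("en_US"); normalize them.
  const normalize = (tag) => tag.toLowerCase().replace("_", "-");
  const wanted = normalize(lang);

  const exact = voices.find((v) => normalize(v.lang) === wanted);
  if (exact) return exact;

  const base = wanted.split("-")[0];
  return voices.find((v) => normalize(v.lang).split("-")[0] === base) || null;
}

// Browser-only usage; skipped where speechSynthesis is unavailable.
if (typeof window !== "undefined" && "speechSynthesis" in window) {
  const utterance = new SpeechSynthesisUtterance("Bonjour tout le monde.");
  const voice = pickVoice(window.speechSynthesis.getVoices(), "fr-FR");
  if (voice) {
    utterance.voice = voice;
    utterance.lang = voice.lang;
  }
  window.speechSynthesis.speak(utterance);
}
```

In some browsers getVoices() returns an empty list until the voiceschanged event has fired, so a production version would likely wait for that event before picking a voice.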

Unique Unreal Speech Advantages as a Javascript Text to Speech API

Unreal Speech emerges as a game-changer in the realm of TTS technology, offering a cost-effective solution that outperforms its competitors. It significantly reduces TTS costs by up to 95%, making it up to 20 times cheaper than Eleven Labs and Play.ht, and up to 4 times cheaper than tech giants like Amazon, Microsoft, IBM, and Google. This cost efficiency is a boon for a wide array of sectors, including small to medium businesses, call centers, telesales agencies, content publishers, game developers, healthcare facilities, financial agencies, educational institutions, and more. The pricing structure of Unreal Speech is designed to scale with the needs of these diverse organizations, offering volume discounts and custom solutions for high-volume clients.

But cost efficiency is not the only advantage Unreal Speech brings to the table. It also offers the Unreal Speech Studio, a tool that enables users to create studio-quality voice overs for podcasts, videos, and more. Users can customize playback speed and pitch to generate the desired intonation and style, and choose from a wide variety of professional-sounding, human-like voices. Furthermore, users can try out the technology with a simple-to-use live Unreal Speech demo, which generates random text and plays it back in Unreal Speech's human-like voices. The audio output can be downloaded in MP3 or PCM µ-law-encoded WAV formats in various bitrate quality settings.

Unreal Speech's commitment to quality and performance is evident in its customer testimonials. Derek Pankaew, CEO of Listening.io, attests to the superior quality and cost efficiency of Unreal Speech, stating that it saved his company 75% on TTS costs while delivering a high-quality listening experience. Unreal Speech's robust infrastructure supports up to 3 billion characters per month for each client, with 0.3s latency and 99.9% uptime guarantees. This level of performance, combined with its cost efficiency and quality, makes Unreal Speech a compelling choice for organizations seeking a reliable, high-quality TTS solution.

FAQs: Navigating the Intricacies of Javascript Text to Speech API

Developers new to speech APIs in JavaScript often ask how Google's cloud speech services compare with the browser's built-in Web Speech API, and what each of them costs. The answers below outline how to call each API and clarify which options are available free of charge, giving developers and businesses a clear starting point for adding TTS to their applications.

How to use Google Speech-to-Text API in JavaScript?

Google's Speech-to-Text API performs the reverse of TTS: it transcribes audio into text. To use it from JavaScript (Node.js), first install the client library's npm package, @google-cloud/speech. Authentication is normally configured through environment variables, typically by pointing GOOGLE_APPLICATION_CREDENTIALS at a service account key file. The library is then imported with the require() function and a SpeechClient is created. The client's recognize() method transcribes audio data into text; it accepts a request object containing the audio and a configuration object detailing the encoding, sample rate, and language of the audio data. The method returns a promise that resolves to an array whose first element is the response holding the transcription results. Handle potential errors with try-catch blocks to keep the application robust.
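The steps above can be sketched roughly as follows. The client is passed in as a parameter so the sketch stays self-contained; the helper names buildRecognizeRequest and transcribe, the default audio settings, and the file name in the usage comment are assumptions made for illustration.

```javascript
// Build the request object for recognize(): a `config` describing the audio
// and an `audio` payload carrying the bytes as a base64 string.
// The defaults (LINEAR16, 16 kHz, en-US) are illustrative assumptions.
function buildRecognizeRequest(
  audioBytes,
  { encoding = "LINEAR16", sampleRateHertz = 16000, languageCode = "en-US" } = {}
) {
  return {
    config: { encoding, sampleRateHertz, languageCode },
    audio: { content: Buffer.from(audioBytes).toString("base64") },
  };
}

// Transcribe audio with an injected client and return the text.
// `client` is expected to expose recognize(request) returning a promise
// that resolves to an array whose first element is the response.
async function transcribe(client, audioBytes, options) {
  try {
    const [response] = await client.recognize(
      buildRecognizeRequest(audioBytes, options)
    );
    // Each result lists alternatives; take the first one of each result.
    return response.results
      .map((result) => result.alternatives[0].transcript)
      .join("\n");
  } catch (err) {
    throw new Error(`Transcription failed: ${err.message}`);
  }
}

// Assumed usage in Node.js, after `npm install @google-cloud/speech`
// and with credentials configured in the environment:
//   const fs = require("fs");
//   const speech = require("@google-cloud/speech");
//   const client = new speech.SpeechClient();
//   transcribe(client, fs.readFileSync("audio.raw")).then(console.log);
```

Injecting the client keeps the request-building logic easy to exercise without network access or credentials.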

How to use Web Speech API in JavaScript?

To use the Web Speech API for TTS in JavaScript, start by creating an instance of the SpeechSynthesisUtterance object. This object holds the text to speak and its properties, such as pitch, volume, rate, and voice. Then pass the instance to the window.speechSynthesis.speak() method to generate the TTS output. Because speak() returns immediately and the speech is produced asynchronously, event handlers such as onstart, onend, and onerror are needed to track and control the TTS process.
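One way to combine the utterance properties and event handlers described above is to wrap them in a promise, as in the sketch below. The function name speakText and its injected synth and Utterance parameters are assumptions made for illustration; in a page they would be window.speechSynthesis and SpeechSynthesisUtterance.

```javascript
// Speak `text` and resolve when speech ends; reject on a synthesis error.
// `synth` and `Utterance` are injected (an assumption of this sketch) so the
// function can also be exercised outside a browser.
function speakText(
  text,
  { pitch = 1, rate = 1, volume = 1, voice = null } = {},
  synth,
  Utterance
) {
  return new Promise((resolve, reject) => {
    const utterance = new Utterance(text);
    utterance.pitch = pitch;   // 0 to 2, default 1
    utterance.rate = rate;     // 0.1 to 10, default 1
    utterance.volume = volume; // 0 to 1, default 1
    if (voice) utterance.voice = voice;

    utterance.onstart = () => console.log("Speech started");
    utterance.onend = () => resolve(utterance);
    utterance.onerror = (event) =>
      reject(new Error(`Speech failed: ${event.error}`));

    synth.speak(utterance);
  });
}

// Browser-only usage; skipped where speechSynthesis is unavailable.
if (typeof window !== "undefined" && "speechSynthesis" in window) {
  speakText(
    "Hello from the Web Speech API.",
    { rate: 1.2 },
    window.speechSynthesis,
    SpeechSynthesisUtterance
  )
    .then(() => console.log("Speech finished"))
    .catch((err) => console.error(err.message));
}
```

Returning a promise lets callers chain follow-up work, such as speaking the next sentence, without nesting event handlers.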

Is Google text to speech API free?

Google's TTS API is not entirely free; it operates on a pay-as-you-go model. Each month includes a free allowance of characters (one million for WaveNet voices, with a larger allowance for standard voices), and usage beyond that allowance incurs a cost. The pricing varies based on the type of voice selected, so consult Google Cloud's pricing page for current figures. It's crucial for developers to monitor their usage to avoid unexpected charges. The API, part of Google Cloud's suite of machine learning tools, supports multiple languages and voices, and allows customization via SSML tags.

What is the Web API for text to speech?

In the browser, the Web API for TTS is the Web Speech API's SpeechSynthesis interface. Beyond the browser, cloud services exposed over the web, such as Microsoft's Azure Cognitive Services, provide a robust SDK for developers to integrate TTS functionality into their applications. Such a service leverages advanced neural network algorithms to synthesize natural-sounding human speech. It supports a wide range of languages and voices, and allows for detailed customization using SSML. Developers can control aspects like pronunciation, volume, pitch, and speed of the speech output. The API is designed to be easy to use, with comprehensive documentation and sample code available to assist in the integration process.
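To illustrate the kind of SSML customization mentioned above, the sketch below builds a small SSML document that sets speed, pitch, and volume through the standard prosody element. The helper names buildSsml and escapeXml are assumptions made for illustration, and individual services may require additional elements (Azure, for example, expects a voice element), so treat this as a starting point rather than a payload that is ready to send.

```javascript
// Escape characters that are reserved in XML so arbitrary text is safe
// to place inside an SSML document.
function escapeXml(text) {
  return String(text)
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;")
    .replace(/'/g, "&apos;");
}

// Hypothetical helper: wrap text in <speak> and <prosody> elements.
// "medium" is a valid SSML keyword for rate, pitch, and volume.
function buildSsml(
  text,
  { rate = "medium", pitch = "medium", volume = "medium", lang = "en-US" } = {}
) {
  return (
    `<speak version="1.0" xmlns="http://www.w3.org/2001/10/synthesis" ` +
    `xml:lang="${escapeXml(lang)}">` +
    `<prosody rate="${escapeXml(rate)}" pitch="${escapeXml(pitch)}" ` +
    `volume="${escapeXml(volume)}">` +
    escapeXml(text) +
    `</prosody></speak>`
  );
}

// Example: slower, louder speech for an announcement.
const ssml = buildSsml("Doors open at 9 & close at 5.", {
  rate: "slow",
  volume: "loud",
});
console.log(ssml);
```

Escaping the text matters because an unescaped ampersand or angle bracket would make the document invalid XML, and a service would be expected to reject it.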

Supplemental Resources for Mastering Javascript Text to Speech API

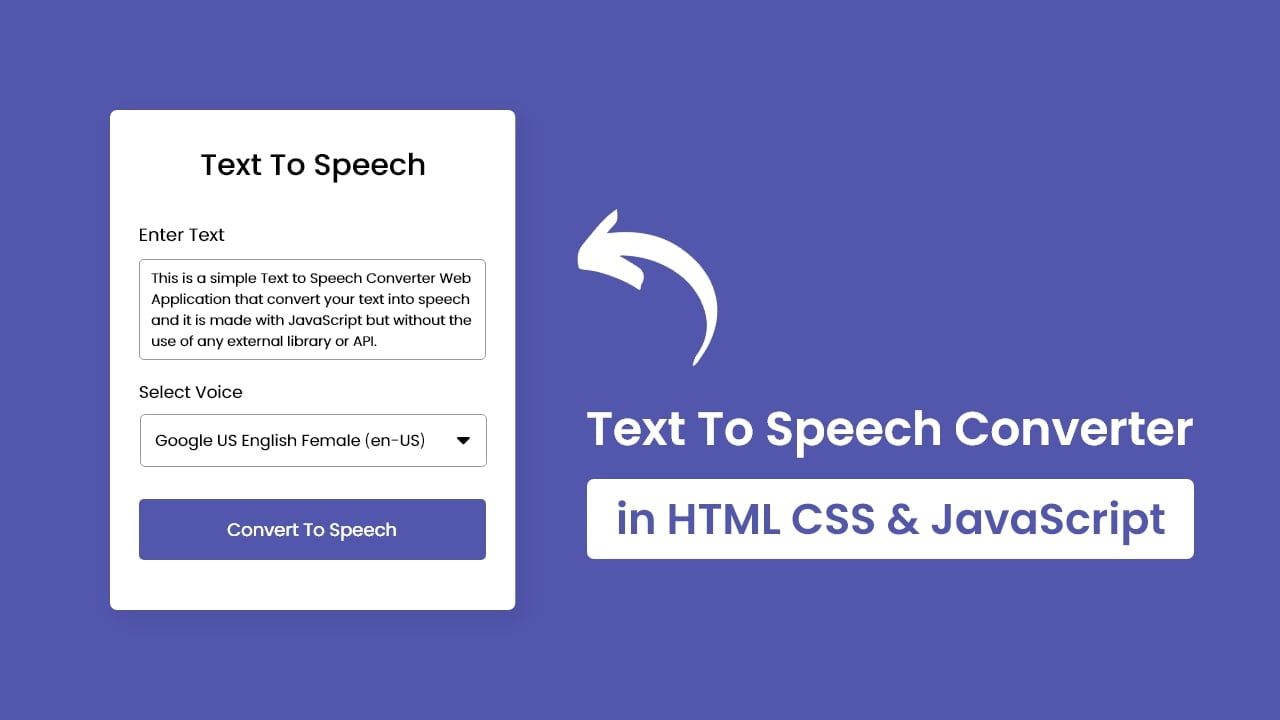

For developers and software engineers, mastering JavaScript Text to Speech API can be a game-changer. One resource that can aid in this journey is the page titled "Text To Speech Converter with JavaScript". Published on Jan 20, 2023, this resource offers in-depth insights, practical examples, and step-by-step guides, making it an invaluable tool for those seeking to enhance their skills.

Businesses and companies can also benefit from understanding and implementing JavaScript Text to Speech API. A particularly useful resource is the page titled "Text To Speech In 3 Lines Of JavaScript". Published on May 19, 2020, this page provides a concise, yet comprehensive guide to implementing TTS functionality, which can significantly improve user experience and engagement.

Educational institutions, healthcare facilities, government offices, and social organizations can leverage JavaScript Text to Speech API to enhance accessibility and inclusivity. A recommended resource is the page titled "Text to Speech using Web Speech API in JavaScript". Published on Jan 17, 2021, this page provides a thorough understanding of the Web Speech API, enabling these entities to create more accessible and user-friendly digital environments.